And the armor became the weight that drowns you.

For four hundred years, the formula was simple. Build the biggest navy, control the chokepoints, control the world. Spain did it. Portugal did it. England perfected it. America inherited and expanded it. Trillions of dollars of installed military infrastructure organized around a single strategic insight: whoever commands the narrow passages where goods must flow commands everything.

Two weeks ago, Iran closed the Strait of Hormuz with missiles and drones. The United States Navy, the most dominant naval force in the history of the world, would not enter the strait to reopen it. Not couldn’t. Wouldn’t. Because the calculus had changed. Cheap missiles and cheap drones had turned the chokepoint into a kill zone for the very ships designed to control it.

The comfortable version of this story is that it was a temporary tactical decision, a one-off situation in a messy regional conflict. The uncomfortable version, the one that defense strategists are whispering to each other but not yet saying on camera, is that this was a Suez moment. The moment the old architecture of power revealed itself as legacy infrastructure.

This isn’t a military essay. This is a business essay. Because the same pattern that just played out in the Persian Gulf is playing out in your company right now, and most executives can smell the smoke but can’t name the fire.

The Pattern

Every major disruption in the last thirty years follows the same structural logic. An expensive, centralized system that maintained power by controlling chokepoints gets routed around by a cheap, distributed alternative. And every time, the incumbents run the same playbook of denial. The same confident assurances delivered in the same reassuring tones by the same people who will later claim they saw it coming all along.

The music industry had it figured out. A handful of record companies controlled the A&R agents, the radio station relationships, the distribution networks, and the retail shelf space. If you were a musician, you needed their infrastructure. There was no other path from garage to audience. Then the internet dissolved every chokepoint in that chain simultaneously. You didn’t need the A&R guy. You didn’t need the radio DJ. You didn’t need the record store. You needed to know how to play. As macro strategist Luke Gromen recently put it: the US Navy is the record company. Iran’s drones are the kid with a SoundCloud account.

Bitcoin did the same thing to monetary chokepoints. For centuries, if you wanted to move value across borders, you needed to pass through the banking system, which meant passing through jurisdictions, which meant passing through the political interests of whoever controlled those jurisdictions. The entire architecture of monetary control rested on chokepoints: correspondent banks, clearinghouses, SWIFT. Satoshi didn’t attack those chokepoints. He made them irrelevant. A protocol replaced an institution. You don’t petition the chokepoint for permission anymore. You route around it.

During the recent Middle East crisis, that wasn’t theoretical. People were paying $350,000 for a private jet out of the UAE. If you needed to get $40 million out with you, gold meant extra luggage fees and some very uncomfortable conversations with customs agents. Bitcoin meant a thumb drive in your pocket. In those moments, Bitcoin wasn’t acting as a risk asset correlated to the NASDAQ. It was acting as exactly what it was designed to be: value transfer that doesn’t need anyone’s permission or anyone’s navy to protect the shipping lane.

The internet democratized information. Bitcoin democratized money. Missiles and drones democratized warfare. And now AI is democratizing the one thing that was supposed to be unchallengeable: the organizational capacity to coordinate complex work at scale.

Same pattern. Same denial. Same outcome. The only variable is how long it takes the people running the expensive centralized system to realize the game changed while they were polishing the brass on the flagship.

Why Companies Exist (And Why That Reason Is Evaporating)

In 1937, Ronald Coase asked a question that economists had somehow never bothered with: if markets are so efficient, why do firms exist at all? Why don’t we all just contract with each other as independent agents in an open marketplace?

His answer was transaction costs. It’s cheaper to bring people under one roof and coordinate them internally than to negotiate, contract, monitor, and enforce agreements with outside parties for every single function. The firm is a transaction cost arbitrage. You accept the overhead of organizational structure because the alternative, managing thousands of external relationships, is even more expensive.
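Coase’s test can be written down as a one-line comparison. The sketch below is only an illustration of that logic, not anything from Coase’s paper: the `Function` class, the `keep_in_house` helper, and every cost figure are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Function:
    """One business function a firm could run in-house or contract out."""
    name: str
    internal_cost: float  # hypothetical: overhead of coordinating it under one roof
    external_cost: float  # hypothetical: cost to negotiate, contract, monitor, enforce


def keep_in_house(fn: Function) -> bool:
    """The arbitrage: internalize only when that is cheaper than the market."""
    return fn.internal_cost < fn.external_cost


# Made-up numbers, chosen only to show both outcomes.
functions = [
    Function("payroll", internal_cost=120.0, external_cost=90.0),
    Function("product design", internal_cost=200.0, external_cost=450.0),
]

in_house = [fn.name for fn in functions if keep_in_house(fn)]
print(in_house)
```

With these numbers only product design stays under the roof. Lower any function’s `external_cost` far enough and it falls out of the list, which is the erosion the rest of this essay is about.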

This was a perfectly elegant explanation. It also described a world where the roof mattered.

Every wave of democratization has been eroding that arbitrage. The internet made the roof irrelevant for information sharing. Cloud computing made it irrelevant for infrastructure. Remote work made it irrelevant for physical presence. Each wave shaved off a piece of the Coase rationale, but the firm held together because one cost remained stubbornly high: the cost of coordinating commitments between people.

That’s the cost AI is about to collapse.

The Commitment Network

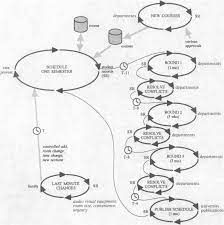

Fernando Flores, working from the philosophy of language and speech act theory, laid out a framework in the 1980s that most of the business world has never encountered but that describes how organizations actually function more accurately than any org chart ever drawn.

Organizations don’t run on hierarchy. They don’t run on processes. They run on networks of human commitments. Every time someone says “I’ll have that to you by Friday,” that’s a promise. Every time someone says “we need to enter this market,” that’s a declaration. Every time someone says “can you take this on?” that’s a request. Every time someone says “that approach won’t work,” that’s an assessment. The entire living tissue of an organization is these speech acts being made, tracked, fulfilled, broken, and renegotiated across thousands of nodes every day.

Middle management exists primarily as commitment routing infrastructure. The project manager checks whether promises got kept. The VP makes assessments about which requests to authorize. The compliance officer verifies that declarations match reality. The department head translates executive declarations into operational requests. Layers and layers of people whose actual function, underneath the job titles and the meeting invites, is maintaining the commitment network.
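One way to make the idea concrete is to treat every speech act as a record and ask who is routing it. This is a rough sketch under my own assumptions: the `Commitment` fields, the `routed_by` attribute, and the sample roles are hypothetical and are not part of Flores’s framework.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class SpeechAct(Enum):
    REQUEST = "request"
    PROMISE = "promise"
    DECLARATION = "declaration"
    ASSESSMENT = "assessment"


@dataclass(frozen=True)
class Commitment:
    act: SpeechAct
    speaker: str
    listener: str
    content: str
    routed_by: Optional[str] = None  # hypothetical: the role that tracks this commitment


def routing_load(commitments):
    """Count how many commitments each role exists to route or track."""
    return Counter(c.routed_by for c in commitments if c.routed_by is not None)


# A toy network built from the examples in the text.
network = [
    Commitment(SpeechAct.DECLARATION, "ceo", "dept_head", "we need to enter this market"),
    Commitment(SpeechAct.REQUEST, "dept_head", "engineer", "can you take this on?",
               routed_by="dept_head"),
    Commitment(SpeechAct.PROMISE, "engineer", "dept_head", "I'll have that to you by Friday",
               routed_by="project_manager"),
    Commitment(SpeechAct.ASSESSMENT, "vp", "engineer", "that approach won't work",
               routed_by="vp"),
]

load = routing_load(network)
print(load)
```

The counter is a crude proxy, but it frames the audit question: for each role, how much of its load is routing commitments rather than making them?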

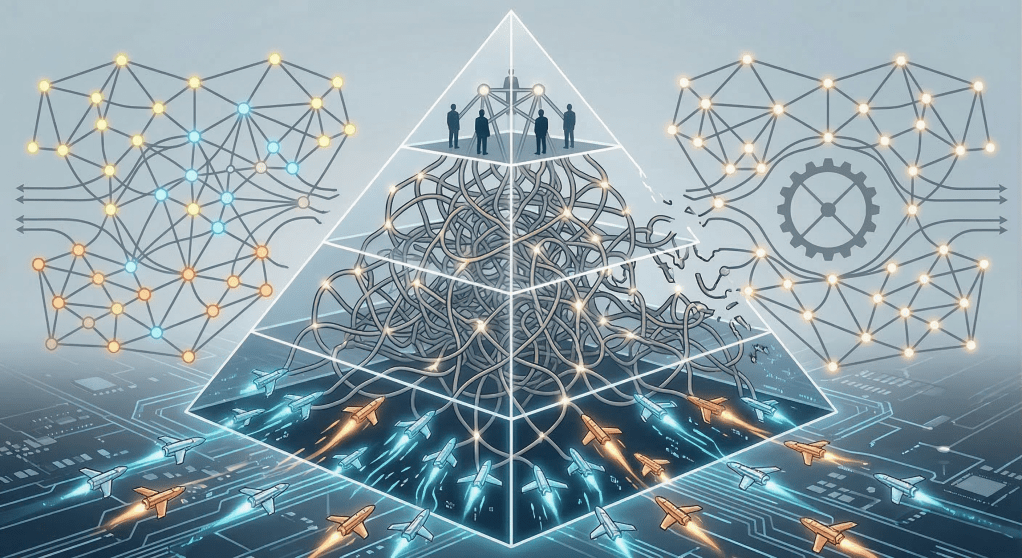

This is the organizational equivalent of the carrier battle group. Expensive. Powerful. Built for a world where coordinating commitments at scale required massive human infrastructure. And about as ready for what’s coming as a guided missile cruiser in a strait full of cheap drones.

What AI Agents Don’t Do

Here is where most of the conversation about AI in the enterprise goes wrong, and it goes wrong in a way that matters enormously.

AI agents don’t make commitments. Not in any meaningful sense. A commitment requires a self that can be held accountable, that understands the social weight of a promise, that can renegotiate in good faith when conditions change. When a human says “I’ll have that to you by Friday,” they’re putting their reputation, their relationships, and their standing in the network on the line. An AI agent that completes a task by Friday has done something that looks like fulfillment but carries none of that social weight. There is no reputation at stake. There is no relationship to preserve. There is no judgment call about when to renegotiate versus when to push through. Nobody’s taking the AI out for a beer to smooth things over after a missed deadline.

This distinction matters because it reveals what AI actually disrupts. The conventional framings ask which tasks AI can perform or which commitments AI can fulfill. Both assume the commitment network stays intact and AI simply occupies nodes within it, like a new employee filling a vacant desk. That’s the CIO framing. That’s the governance-and-scaling framing. And it’s wrong.

AI agents don’t replace nodes in the commitment network. They eliminate the need for entire commitment chains to exist at all.

Think about what that means. In the current organizational architecture, you need massive human infrastructure to route commitments because commitments are expensive. Making a request costs social capital. Tracking a promise requires attention and judgment. Assessing whether a declaration was honored requires context and trust. Renegotiating a broken commitment requires relationship skill. All of that friction is why you need layers of humans managing the network.

When an AI agent can execute work that previously required a request to be made, a promise to be given, fulfillment to be tracked, and quality to be assessed, that entire commitment chain doesn’t get automated. It vanishes. The request was never made. The promise was never given. The tracking never happened. The assessment was never needed. The chain of human commitments that existed to coordinate that work simply ceases to exist. Poof. Like it was never there. Which, if you think about it, is kind of the point.

And that’s far more disruptive than replacement. When you replace a node in a commitment network, the network adapts. Someone new fills the role, learns the relationships, picks up the obligations. The network flexes and continues. But when you eliminate the need for entire chains of commitments, the network contracts. And as it contracts, the roles that existed primarily to route, track, and manage those commitments lose their reason to exist. Not because AI took their tasks, but because AI made the coordination that justified their presence unnecessary.
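The difference between replacing a node and eliminating a chain is easy to see in a toy model. The sketch below assumes a deliberately simplified picture in which each commitment chain is just a name mapped to the roles that route it. The chain names, the roles, and the `orphaned_roles` helper are all hypothetical.

```python
def orphaned_roles(chains, eliminated):
    """Return the routing roles left with no commitment chain to manage.

    chains: dict mapping a chain name to the set of roles that route it.
    eliminated: set of chain names that no longer need to exist.
    """
    before = set().union(*chains.values())
    remaining = [roles for name, roles in chains.items() if name not in eliminated]
    after = set().union(*remaining)
    return before - after


# Hypothetical chains and the roles that exist to route them.
chains = {
    "weekly status report": {"project_manager", "dept_head"},
    "invoice reconciliation": {"project_manager", "compliance_officer"},
    "market-entry decision": {"dept_head", "vp"},
}

# Assume agents now execute the work behind the first two chains directly.
eliminated = {"weekly status report", "invoice reconciliation"}

orphaned = orphaned_roles(chains, eliminated)
print(sorted(orphaned))
```

Nobody in this model was replaced. Two chains stopped existing, and two roles were left with nothing to route.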

This is why the 2008 analogy is so uncomfortably apt. In February of 2008, 92.7% of American mortgages were current. Seven months later the financial system nearly collapsed. You didn’t need every mortgage to go bad. You needed enough of them to go bad that the system built on top of them couldn’t absorb the shock. The same is true of commitment networks. You don’t need AI to eliminate every commitment chain in the organization. You need it to eliminate enough of them that the management layer built on top of those chains can’t justify its cost structure. And nobody is measuring that. Nobody is even looking at it. They’re too busy running pilots.

The Eighth Graders in the Garage

Gromen tells a story about eighth graders in a garage who are going to put a $10 billion company out of business. He first said it in October of last year. By February, software-as-a-service was getting gutted by vibe coders, and the prediction was already playing out. Sometimes the future has the decency to show up on schedule.

Those eighth graders aren’t just writing code. They’re operating without a commitment network entirely. There are no requests to route, no promises to track, no management layers to assess quality. Two people and a stack of AI agents, and the work gets done. Not because the agents are making commitments on their behalf, but because the work no longer requires the human coordination infrastructure that commitments exist to manage.

They don’t need the carrier group. They don’t need the record company. They don’t need the Coase rationale. Their transaction costs for coordination are approaching zero, which means they don’t need to bring anything under one roof, which means they don’t need the roof. They probably don’t even have a roof. They’re in a garage. That’s the whole point of a garage.

The $10 billion company they’re about to displace has thousands of people maintaining a commitment network that grew organically over decades. Most of those commitments are habitual rather than intentional. Nobody audited them. Nobody asked whether they still serve the organization’s actual purpose or whether they’re just the scar tissue of decisions made by people who retired in 2014. The network calcified into an org chart, and the org chart became the thing everyone optimized around, and now the CIO is being told to inject AI into the org chart to make it more efficient.

That’s optimizing the carrier group while the nature of warfare changes underneath you. It’s like putting a really nice sound system on the Titanic. Great acoustics. Shame about the iceberg.

The Question Nobody Is Asking

Read any CIO publication right now and the conversation is almost entirely about governance, pilots, ROI measurement, and scaling AI experiments within existing structures. McKinsey says only 10% of businesses have scaled AI agents across any business function. Industry estimates say 70 to 95% of AI pilots never get off the ground. The entire enterprise conversation is organized around one question: how do we get AI to work inside our existing structure?

That’s the wrong question. It’s the naval equivalent of asking how to make carrier groups more efficient while the nature of the chokepoint itself has changed.

The prior question, the one that should be keeping executives awake, is this: does the existing structure have a reason to exist in its current form? If democratization and distribution are dissolving the economic rationale for the firm as Coase defined it, then what exactly are you governing? What are you scaling? What are you measuring ROI on?

If the commitment network is the only thing actually holding the organization together now that the roof doesn’t matter, the infrastructure doesn’t matter, and the coordination costs are collapsing, then the real diagnostic isn’t about technology adoption at all. It’s about examining which commitment chains in your organization still need to exist, and which ones are legacy infrastructure maintained by habit and org chart inertia, waiting to be made irrelevant by two kids in a garage who never needed them in the first place.

The question for the CEO is not “which tasks can AI do?” It’s not even “which commitments can AI fulfill?” because AI doesn’t make commitments. The question is: which commitment chains in my organization become unnecessary when the underlying work no longer requires human coordination to execute? How much of my management structure exists to route, track, and assess commitments that AI agents will simply cause to never be made?

That’s a fundamentally different diagnostic than anything in the CIO literature right now. And it’s the one that determines whether your organization is the navy that adapts to the new reality or the carrier group that sails into the kill zone convinced that size and expense still equal power.

Two Kinds of Authority

There’s one more layer to this that most people miss, and it matters for understanding what actually holds together when everything else dissolves.

There are two fundamentally different kinds of authority operating in any commitment network. The first is external: rule of law, contracts, regulatory frameworks, the legal infrastructure that makes commitments enforceable between parties who don’t trust each other. This is the authority Coase was implicitly relying on. Firms could exist because courts could enforce contracts and protect property. And notably, this is exactly the kind of authority that Bitcoin replaces in the monetary domain. Trustless enforcement through protocol. You don’t need the legal chokepoint to guarantee the commitment because the mathematics guarantees it.

The second kind of authority is internal: the declared power to set direction, allocate resources, make requests that carry weight within an organization. When a CEO says “we’re pivoting to this market,” that’s a Floresian declaration. It restructures the entire commitment network downstream. Every promise, every request, every assessment now has to realign. This authority isn’t enforced by courts. It’s enforced by shared understanding, culture, legitimacy. And it’s far more fragile than most leaders realize, because it depends on the network actually existing to carry the signal. A declaration that nobody routes is just a memo nobody read.

The democratization wave is pressuring both simultaneously. External authority structures get disintermediated by protocols and platforms. Internal authority structures get hollowed out as AI agents eliminate the commitment chains that gave middle management its function and its legitimacy.

What remains when both forms of authority are under pressure? Only the commitments that genuinely require human judgment, human accountability, and human relationship to function. The declarations that set direction. The assessments that require wisdom, not data. The promises where reputation and trust are the actual mechanism of enforcement. The requests that carry moral weight because a person is asking another person to take something on.

Those commitments are the irreducible core of the organization. Everything else is coordination infrastructure. And coordination infrastructure is what democratization destroys. Every time. In every domain. Without exception.

The Smoke Is in the Building

The pattern is always the same. The expensive centralized system denies the threat. The Chinese just make cheap stuff. AI still hallucinates. Bitcoin is too volatile. Drones can’t really threaten a carrier group. They’re right for a while. Then they’re not. And the transition from “right for now” to “catastrophically wrong” happens faster than anyone inside the incumbent structure can process. It always does. That’s not a prediction. That’s a pattern you could set your watch to if you still wore a watch, which you don’t, because your phone killed that industry too.

AI is hollowing out white-collar employment at a pace governed not by how fast you can build factories but by how fast you can connect computers. The Rust Belt lost 35% of its manufacturing jobs to China over decades because displacement was gated by the speed of building physical plants. AI displacement has no such governor. Connect a couple of computers. Boom. Gone. And the people who lose those jobs aren’t living debt-free. They have mortgages and car payments and credit cards and kids in college and a lease on a Range Rover they probably shouldn’t have signed in the first place. When enough of them stop paying, the credit system cracks. And we’ve seen this movie before. It doesn’t end with everyone keeping their house.

But the organizational version of this plays out on its own timeline, and it’s already underway. Every commitment chain that AI makes unnecessary is a thread pulled from the organizational fabric. Pull enough threads and the garment doesn’t just get thinner. It loses its shape entirely. The question isn’t whether this is coming. The question is whether you’re examining your commitment network now, while you still have the ability to redesign it intentionally, or whether you’re waiting until the eighth graders in the garage have already made the question academic.

The smoke is in the building. The question is whether you’re willing to name what’s burning.

* * *

Principal, Brian Connelly Consulting

Organizational Readiness for the Age of Agentic AI

brianconnelly.com
