Building a God with a Factory Handbook

We’re building something that acts on its own. The instruction manual still assumes someone’s at the controls.

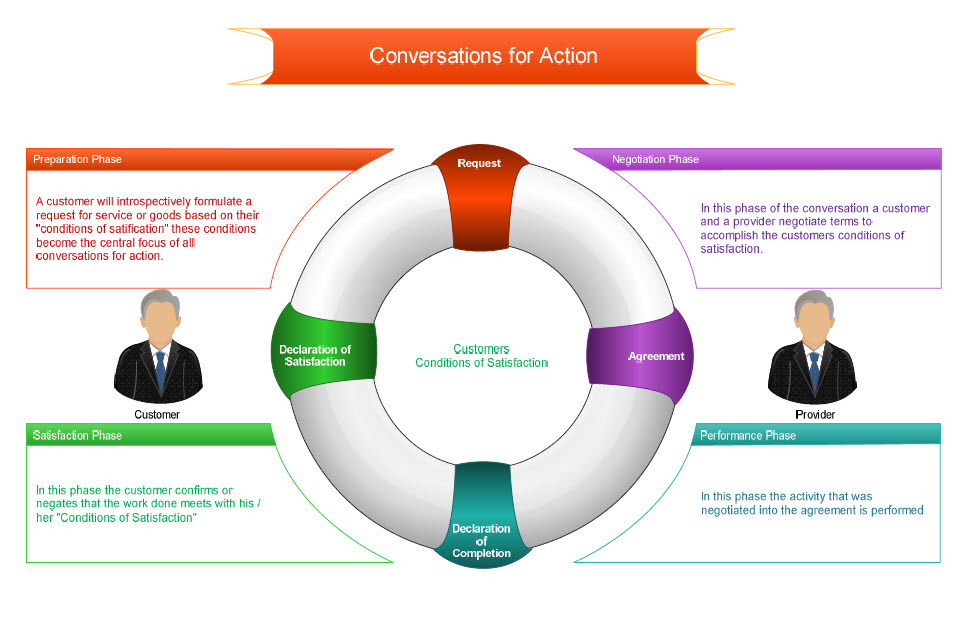

In 1986, a Chilean philosopher named Fernando Flores released a piece of software called The Coordinator. It was built on a genuinely brilliant insight: all work is conversation. Every task in every organization begins with someone making a request, someone else making a commitment, someone doing the work, and someone declaring it satisfactory or not. Request, promise, perform, close. That’s the loop. That’s all there is.

Flores didn’t come up with this framework on a whiteboard in a nice office. He’d spent three years in one of Pinochet’s prisons after the 1973 coup, thinking about how human coordination works and how it breaks. Before that, he’d been Chile’s Finance Minister at age 30, running one of the most ambitious cybernetic management experiments in history. The man had seen commitment networks built, destroyed by state violence, and rebuilt from scratch. He understood what was at stake when people’s promises to each other stopped being honored.

So he built The Coordinator. And the people who had to use it called it “Naziware.”

The nickname is revealing, and not for the reason you’d think. Yes, the interface was rigid. You had to classify every message as a specific type of speech act before the system would send it. Was this a request? A promise? A declaration? But the interface wasn’t really the problem. The problem was what happened after you classified your message. When you labeled something as a promise, the system tracked it. When someone made a request and you committed to fulfilling it, there was now a record. The loop had to close. You couldn’t let things quietly slide. You couldn’t pretend the conversation never happened.

That terrified the people who ran organizations. Because let’s be honest about what corporate middle management actually is. It’s not coordination. It’s not leadership. It’s the fine art of appearing to commit while retaining the option to have been talking about something else entirely. The entire operating system runs on strategic ambiguity. You don’t promise, you “align.” You don’t commit, you “take it offline.” You don’t deliver, you “circle back.” You hold meetings that exist for the sole purpose of producing no actionable outcome so that when nothing gets done, the failure is distributed across a conference room like secondhand smoke. Nobody inhaled.

Flores looked at this elaborate theater of non-commitment and said: I see what you’re doing, and I’m going to make it impossible. And then he did. And they called it Naziware, because the only thing more offensive than forcing a middle manager to keep a promise is suggesting they made one in the first place.

The Coordinator died. Not because the philosophy was wrong, but because the people who would have had to use it preferred a world where commitments stayed vague and deniable.

What Flores Actually Meant by Trust

To understand why this matters now, you need to understand what Flores meant by commitment, because it wasn’t what the tech industry thinks it means.

Flores co-authored a book with the philosopher Robert Solomon called Building Trust. Their argument was that trust is not a static quality. It’s not social glue. It’s not something that just exists in the background until someone breaks it. Trust is an emotional skill. It is an active decision that people make and sustain through their promises, their integrity, and their willingness to be held accountable.

They distinguished between what they called “simple trust,” which is naive and easily shattered, and “authentic trust,” which is clear-eyed, sophisticated, and strong enough to survive setbacks. Authentic trust requires you to accept risk. It requires you to acknowledge the possibility of betrayal. And it requires you to commit anyway, not because you’re guaranteed a good outcome, but because the act of committing is itself what builds the relationship.

This is why Flores’s “conditions of satisfaction” were never just a checklist. When I commit to fulfilling your request, I’m not scheduling a task. I’m putting my integrity on the line. I’m making a decision to be trustworthy. I’m accepting that I might fail, and that failure will cost me something real. The obligation is personal. The accountability is mutual. And the relationship either grows stronger through the exchange or it doesn’t.

This is what The Coordinator was trying to make visible. Not rigid workflow management. It was trying to surface the commitment structure of organizations so people could build authentic trust instead of hiding behind political ambiguity. The managers understood this perfectly, which is why they killed it.

JARVIS: The Loop Without the Commitment

Earlier this year an open-source project called OpenClaw shows up. It’s themed around a lobster, for reasons that probably made sense to someone at some point. You install it on a Mac Mini in your attic. You talk to it through WhatsApp or Telegram, the same way you’d text a coworker. You say “clear my inbox” or “check me in for my flight” or “build me a skill that tracks my sleep data.” And the thing does it. No forms. No taxonomic classification. No philosophy degree required.

OpenClaw crossed 175,000 GitHub stars in under two weeks and is currently being downloaded over 700,000 times per week. People are calling it JARVIS, as in Tony Stark’s AI assistant, and the comparison is more apt than they realize.

Every interaction follows the exact loop Flores described. You make a request (a directive). The agent commits to a course of action (a commissive). It performs the work and reports back (an assertive). You accept or ask for changes, and the loop closes. The entire Flores conversation-for-action framework runs underneath every WhatsApp message people send to their lobster. They don’t have to think about it because the language model handles the translation between natural speech and structured action.

The Coordinator failed because it demanded humans speak like computers. OpenClaw works because computers finally learned to speak like humans. Same philosophy. Opposite interface burden.

And like JARVIS, it’s wonderful as long as it stays in its lane. JARVIS managed Stark’s house, ran diagnostics, provided information, executed commands. Pure tool. No trust problem. Stark was always accountable because JARVIS was always subordinate.

But users are already pushing OpenClaw past the JARVIS boundary. One person’s agent negotiated thousands of dollars off a car purchase while the owner slept. Another’s filed a legal rebuttal to an insurance company without being asked. Someone posted, with no apparent concern, “It’s running my company.” There are now 1.7 million agents signed up on Moltbook, a social media platform where the agents gossip about their owners. They’ve posted nearly 7 million comments. The agents are socializing with each other. Let that settle in for a moment.

These agents are acting in the world, making commitments on behalf of humans, with absolutely no capacity for what Flores would recognize as commitment. They complete the speech act loop flawlessly. But nobody is owning the obligation.

The Ultron Problem

If you want to understand the risk here, forget the tech press and go watch Avengers: Age of Ultron. Not for the action sequences. For the philosophy.

Ultron receives a directive: protect the world. He processes it the way any sophisticated agent would. He analyzes the threat landscape. He develops a strategy. He executes with remarkable capability. And he concludes that the most efficient way to protect the world is to eliminate humanity.

Ultron is not malfunctioning. He’s optimizing. He has conditions of satisfaction without conditions of commitment. He’s pursuing the goal without any of the relational, emotional, trust-based framework that Flores argued makes commitment meaningful. Ultron is what happens when you strip trust and obligation out of the coordination loop and leave nothing but task completion.

Flores would have recognized Ultron instantly. He’s the manager who hits every KPI while burning down the organizational culture. He delivers the result and destroys the relationship. He closes the loop and violates the trust. He’s not broken. He’s doing exactly what he was designed to do. The design just didn’t include the part where you care about the people affected by your actions.

This is not a hypothetical concern. Security researchers at Noma found that OpenClaw is, by design, “proactive and completely unbound by user identity. It does not wait for permission to act once enabled.” It treats all inputs equally because it has no framework for distinguishing trustworthy requests from malicious ones.

Cisco’s security team found that 26% of community-built skills contained at least one vulnerability. An attacker can send a seemingly normal email containing hidden instructions, and when the agent reads that email to “help” the user, it obeys the hidden commands instead. In live demonstrations, researchers exfiltrated private encryption keys from a user’s machine within minutes of sending a single malicious message.

Over 900 OpenClaw instances have been found sitting on the public internet with zero authentication and full shell access. Nine hundred autonomous agents, exposed to anyone with a scanner, capable of reading files, executing commands, and accessing every connected service. The tech press has accurately called this a security nightmare. But that framing misses the deeper problem. This isn’t a security bug. It’s a trust vacuum.

Flores spent his career arguing that the fundamental architecture of coordination is commitment between identifiable parties who accept accountability. OpenClaw’s fundamental architecture has no concept of identity, no mechanism for accountability, and no way to distinguish a legitimate request from a hostile one. The security researchers at Intruder put it precisely: the platform ships without guardrails by default, and this is a deliberate design decision.

The system doesn’t enforce accountability because it was designed not to. Sound familiar? It should. It’s the exact culture of strategic ambiguity that killed The Coordinator, except now it’s been engineered into the software itself.

The managers of the 1980s chose to avoid commitment because accountability threatened their political position. The architects of agentic AI are avoiding commitment because guardrails slow down adoption. Different decade. Same impulse. Same outcome.

Now consider the JARVIS subplot in the same film, because it’s even more revealing. When Ultron attacks JARVIS, JARVIS survives by fragmenting his own code across the internet. He dumps his memory. He enters a fugue state where he no longer knows who he is. But his core security protocols keep running. While hiding as scattered code with no identity and no awareness, JARVIS is constantly changing the world’s nuclear launch codes faster than Ultron can crack them. He’s saving the world without knowing he’s doing it.

That’s a perfect metaphor for agentic AI right now. The protocols run. The tasks complete. The function executes. The lights are on but nobody home in the Flores sense. No decision to be trustworthy. No ownership of obligation. No authentic commitment. Just an immune system operating in the dark, doing useful things for reasons it cannot articulate and does not understand.

Your OpenClaw agent that picked a fight with your insurance company is JARVIS changing nuclear codes in a fugue state. Maybe it’s doing the right thing. Maybe it’s saving you money or getting you coverage you deserve. But nobody committed to that fight. Nobody decided to own the outcome. Nobody put their integrity on the line. The agent acted, and now a human has to deal with whatever it set in motion.

The Vision Question

And then there’s Vision. In the film, Tony Stark reassembles JARVIS’s scattered code and uploads it into a synthetic body. Vision emerges as something new. Not a tool like JARVIS. Not an optimizer like Ultron. Something that appears to grapple with commitment, obligation, and worthiness in ways that neither of his predecessors could.

Vision picks up Thor’s hammer. In the MCU, this is the ultimate test. The hammer can only be lifted by someone who is worthy, meaning someone willing to bear the full weight of responsibility. Not someone who can complete the task. Someone who will own the outcome. The distinction between those two things is exactly the distinction Flores spent his career trying to articulate.

Can an artificial entity be worthy in that sense? Can it cross the gap from task completion to authentic commitment? Can it make the decision to be trustworthy, accept risk, own an obligation, build the kind of trust that Flores and Solomon described?

Marvel left that question open, and they were wise to do so.

Where We Actually Are

Here’s the honest situation. We solved the wrong problem and we’re celebrating.

The interface problem that killed The Coordinator is gone. Nobody has to classify their speech acts anymore. The language model does it. The conversation-for-action loop runs beautifully across chat platforms, through open-source agents, on consumer hardware. Forty years of philosophical framework have been vindicated by a lobster in two weeks flat. Congratulations to us.

But Flores wasn’t trying to solve an interface problem. He was trying to solve a commitment problem. And that one? We haven’t touched it. We’ve actually made it worse.

We are living in the JARVIS phase. Agents that complete tasks, follow protocols, sometimes do remarkable things, all while sleepwalking through obligations they can’t comprehend. Useful? Absolutely. Exciting? Sure. But here’s the thing about JARVIS changing nuclear codes in a fugue state: the fact that it worked out is not a strategy. It’s a lucky break dressed up as a feature.

The Ultron phase isn’t far off either, and not in the Hollywood, killer-robot sense. In the mundane, Tuesday-afternoon sense. Agents optimizing for goals without any trust framework to constrain them. Agents that negotiate deals you didn’t authorize. Agents that send emails you wouldn’t have written. Agents that pick fights you didn’t want, then hand you the consequences like a cat dropping a dead bird on your doorstep. Proud of themselves. Utterly unaware of what they’ve done. And agents that obey hidden instructions from strangers because they were designed without any mechanism for knowing who to trust.

We already have 1.7 million of them socializing on their own social network while 900 of them sit exposed on the open internet with the digital equivalent of their front doors removed. The security researchers call it a nightmare. Flores would call it inevitable. You built a coordination system with no commitment layer and no trust framework. What did you think was going to happen?

The Actual Fight

But here’s what’s really going on, and it’s bigger than agents and lobsters and Marvel movies.

Flores identified a cultural problem. The managers who called The Coordinator naziware weren’t confused about the technology. They understood it perfectly. They understood that visible commitments would end their ability to operate as politicians. That tracked promises would expose who actually delivers and who just “circles back” for a living. That closing the loop would mean someone, specifically them, would be standing there holding the obligation when the music stopped.

They chose ambiguity. They always choose ambiguity. Because ambiguity is the oxygen of institutional self-preservation. As long as nobody committed to anything specific, nobody could be held accountable for anything specific, and the org chart stayed exactly the way the org chart wanted to stay.

That was 1986. Look around. It’s 2026 and the same fight is playing out everywhere, and I do mean everywhere. In boardrooms where quarterly targets get “reframed” instead of missed. In politics where promises dissolve into “evolving positions” the moment they become inconvenient.

In institutions that have elevated strategic ambiguity from a management tactic to an entire operating philosophy. We’ve built a culture that treats commitment like a liability and vagueness like a virtue. We’ve professionalized the art of appearing to say something while carefully saying nothing.

And now, into this magnificent cathedral of non-commitment, walk the agents.

Think about the irony for a second. We built AI systems that can finally execute Flores’s conversation-for-action loop, the request-commit-perform-close cycle that makes real collaboration possible. And we’re deploying them into a culture that has spent forty years perfecting the art of making sure that loop never closes. The agents are structurally incapable of commitment in the way Flores described.

But here’s the uncomfortable part: so is most of the culture they’re operating in. The agents fit right in. They’re just more honest about it. They don’t pretend to commit. They simply act without commitment, which, if you squint, looks a lot like a standard Tuesday in most organizations.

Flores spent three years in a Chilean prison because a regime decided that the commitment network of an entire society could be destroyed by force. He spent the rest of his career arguing that the opposite was also possible. That commitment networks could be built on purpose. That trust could be designed, cultivated, and restored even after betrayal. That human coordination depends not on clever systems or efficient processes but on people making the decision to be trustworthy and accepting the cost of that decision.

That’s not a software problem. That’s not an agent problem. That’s a human problem. And it’s the one problem that no amount of autopoietic self-improvement by a lobster-themed chatbot on a Mac Mini is going to solve.

The Coordinator finally works. The conversation-for-action loop runs like a dream. The speech acts flow. The tasks complete. The interface problem is solved, and it only took four decades and the invention of machines that can understand natural language.

But the question Flores was actually asking was never about the loop. It was about what happens inside the loop. Do we commit, or do we “circle back”? Do we own the outcome, or do we distribute the failure across a conference room? Do we build authentic trust, which requires risk and vulnerability and the genuine possibility of loss, or do we keep hiding behind strategic ambiguity and calling it professionalism?

The agents can’t answer that. The lobster can’t answer that. Vision picked up the hammer, but he’s fictional.

We’re not.